The lack of real-world practitioners influencing strategy and direction has had a detrimental effect on web analytics. Standard techniques such as customer-driven innovation have never taken deep root in the field. Most progress has instead been driven by possibility-driven innovation, as in: “What else is possible for us to do with the data we capture? Let’s innovate based on that.”

Visiting a website is a radically different proposition if you look at it through the lens of data collection. […] The website knows every “aisle” you walked down, everything you touched, how long you stayed reading each “label”, everything you put in your cart and then discarded, and lots and lots more.

We have clicks, we have pages, we have time on site, we have paths, we have promotions, we have abandonment rates, and more. It is important to realize that we are missing a critical facet to all these pieces of data […] is the why.

Combining the what with the why can be exponentially powerful.

Methods to collect customer qualitative data

- Lab usability testing

- Follow-me-homes

- Testing

- Unstructured remote conversations

- Surveying

Trinity strategy – get actionable insights

- Behavior analysis – infer customer intent

- Outcome analysis – What happened, what was the outcome?

- Experience analysis – Why does this all happen?

Why does your website exist?

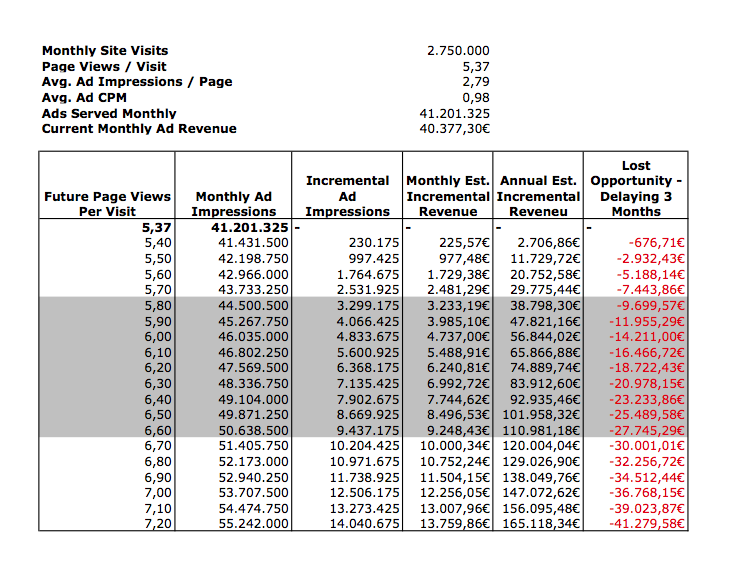

After you have an answer to “Why does your website exist?”, it is imperative to investigate how your decision-making platform will capture the data that will help you understand the outcomes and judge whether your website is successful beyond simply attracting traffic and serving up pages.

My recommendation is to have a web research team whose members, the researchers, sit with and work alongside the web analysis team.

I recommend that you have at least one continuous listening program in place. Usually the most effective mechanism for continuous listening, benchmarking, and trending is surveying.

User research

At the highest level, user research is the science of observing and monitoring how we (and our customers) interact with everyday things such as websites, software, or hardware, and then drawing conclusions about how to improve those customer experiences.

Lab usability testing

- Best for optimizing UI design and workflows, understanding the voice of the customer, and understanding what customers really do

Preparing the test

- Identify critical tasks

- Create scenarios for each task

- For each scenario, establish a goal

- Identify the desired user group

- Create a compensation structure for the participants

- Hire the right people

- Do pre-tests internally

Conducting the test

- Brief the participants

- Start with a “thinking aloud” exercise

- Have participants read the tasks aloud to ensure that they read the whole thing

- Carefully observe their verbal and nonverbal cues

- The moderator can ask the participant follow-up questions

- Thank the participants and pay them directly

Analyzing the data

- Hold a debriefing session

- Note the trends and patterns

- Do a deep dive analysis to identify the root causes of failures based on actual observations

- Make recommendations to fix the problems and prioritize them
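The “note the trends and patterns” step can start with simple per-task numbers before the deep dive. The following is a minimal sketch. It assumes each observation is logged as a participant, a task, whether the participant completed it, and the time taken. The field names, the sample rows, and the 80% success benchmark are hypothetical illustrations, not part of any standard method.

```python
from collections import defaultdict
from statistics import median

# Hypothetical observation log: one row per participant per task.
observations = [
    {"participant": "P1", "task": "find pricing", "completed": True, "seconds": 45},
    {"participant": "P2", "task": "find pricing", "completed": True, "seconds": 60},
    {"participant": "P3", "task": "find pricing", "completed": False, "seconds": 180},
    {"participant": "P1", "task": "checkout", "completed": False, "seconds": 240},
    {"participant": "P2", "task": "checkout", "completed": False, "seconds": 300},
    {"participant": "P3", "task": "checkout", "completed": True, "seconds": 150},
]

def summarize_tasks(rows, benchmark=0.8):
    """Per task: success rate, median time, and whether the rate misses the (assumed) benchmark."""
    by_task = defaultdict(list)
    for row in rows:
        by_task[row["task"]].append(row)
    summary = {}
    for task, task_rows in by_task.items():
        rate = sum(r["completed"] for r in task_rows) / len(task_rows)
        summary[task] = {
            "success_rate": rate,
            "median_seconds": median(r["seconds"] for r in task_rows),
            "below_benchmark": rate < benchmark,
        }
    return summary

# Lowest success rates first: these tasks are the first candidates for root-cause analysis.
for task, stats in sorted(summarize_tasks(observations).items(),
                          key=lambda kv: kv[1]["success_rate"]):
    print(task, stats)
```

The numbers only point at where to look. The root causes still come from what the observers actually saw and heard.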

Don’t forget to measure success post-implementation. So we spent all the money on testing; what was the outcome? Did we make more money? Are customers satisfied? Do we have lower abandonment rates? The only way to keep funding going is to show a consistent track record of success that affects either the bottom line or customer satisfaction.
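One way to answer “Do we have lower abandonment rates?” is to compare the rate before and after the change with a two-proportion z-test. The following is an illustration under stated assumptions. The counts are made up, the function name is hypothetical, and the normal approximation needs reasonably large samples. A before/after comparison also cannot rule out seasonality or other concurrent changes. A controlled A/B test is the cleaner design when one is available.

```python
import math

def two_proportion_z(events_before, n_before, events_after, n_after):
    """Two-proportion z-test for a change in a rate such as cart abandonment.

    Returns (after rate minus before rate, z score, two-sided p-value).
    """
    p_before = events_before / n_before
    p_after = events_after / n_after
    pooled = (events_before + events_after) / (n_before + n_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    if se == 0:
        raise ValueError("rates of exactly 0% or 100% in both periods cannot be tested this way")
    z = (p_after - p_before) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_after - p_before, z, p_value

# Hypothetical counts: 620 of 1,000 carts abandoned before the redesign, 540 of 1,000 after.
diff, z, p = two_proportion_z(620, 1000, 540, 1000)
print(f"change in abandonment rate: {diff:+.1%}, z = {z:.2f}, p = {p:.4f}")
```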

Heuristic evaluation

Heuristic evaluations follow a set of well-established rules in web design and in how website visitors experience websites and interact with them.

Heuristic evaluations can also be done in groups: people with key skills all attempt to mimic the customer experience under the stewardship of the user researcher. The goal is to complete tasks on the website as a customer would.

Heuristic evaluations are at their best when used to identify which parts of the customer experience are most broken on your website.

Conducting:

1. Understand the core tasks

2. Establish success benchmarks

3. Walk through each task and make notes of key findings

4. Make notes of best-practice rule violations

5. Create reports

6. Categorize recommendations & prioritize

25-point website usability checklist: http://www.usereffect.com/topic/25-point-website-usability-checklist
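Steps 4 to 6 involve recording each rule violation, grouping the violations, and ordering them. The following is a minimal sketch. The 1-to-4 severity scale, the 1-to-3 effort scale, the category names, and the example findings are all hypothetical. Within each category, the sketch puts the most severe problems first, and cheaper fixes break ties.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    task: str           # core task being walked through (step 3)
    rule_violated: str  # best-practice rule that was broken (step 4)
    category: str       # grouping used in the report (step 6)
    severity: int       # hypothetical scale: 1 = cosmetic ... 4 = blocks the task
    effort: int         # hypothetical scale: 1 = quick fix ... 3 = major rework

def prioritize(findings):
    """Group findings by category; within each, most severe first, cheaper fixes breaking ties."""
    grouped = {}
    for finding in findings:
        grouped.setdefault(finding.category, []).append(finding)
    for items in grouped.values():
        items.sort(key=lambda f: (-f.severity, f.effort))
    return grouped

# Illustrative findings only; real rules come from your own checklist.
findings = [
    Finding("checkout", "Error messages explain how to recover", "forms", 4, 1),
    Finding("search", "Search box visible on every page", "navigation", 3, 2),
    Finding("checkout", "Field labels sit next to their inputs", "forms", 2, 1),
    Finding("search", "Links look clickable", "navigation", 3, 1),
]

for category, items in prioritize(findings).items():
    print(category)
    for f in items:
        print(f"  severity {f.severity}, effort {f.effort}: {f.task}: {f.rule_violated}")
```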

Follow-Me-Home studies

Perhaps the best way to get as close as possible to the customer’s “native” environment.

Preparation:

1. Set customer expectations clearly

2. Assign proper roles up front (moderator, note taker, video person, etc.)

Conducting:

1. Spend 80% of the time observing

2. Let them do the tasks as they normally would

3. Don’t teach them

4. The moderator can ask clarifying questions

Surveys

- Optimal method for collecting feedback from a very large number of customers relatively cheaply and quickly

- Website surveys

  - Site-level surveys

    - Usually ask about the overall site experience and gather more context about the customer’s visit

  - Page-level surveys

    - Measure the performance of individual pages

    - Shorter than site-level surveys

    - Collect satisfaction rates or task-completion rates

- Post-visit surveys

  - Rate the ordering process, delivery, etc.
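Page-level surveys produce task-completion and satisfaction rates per page. The following is a minimal sketch of that arithmetic. It assumes each response records the page, a yes/no task-completion answer, and a rating on a 1-to-5 scale. Counting ratings of 4 or 5 as “satisfied” is an assumed convention, not a rule from the text, and the sample responses are made up.

```python
from collections import defaultdict

# Hypothetical page-level responses: (page, completed_task, satisfaction on a 1-5 scale).
responses = [
    ("/support", True, 5),
    ("/support", False, 2),
    ("/support", True, 4),
    ("/pricing", True, 3),
    ("/pricing", True, 5),
]

def page_rates(rows, satisfied_threshold=4):
    """Per page: response count, task-completion rate, and share of ratings at or above the threshold."""
    totals = defaultdict(lambda: {"n": 0, "completed": 0, "satisfied": 0})
    for page, completed, rating in rows:
        t = totals[page]
        t["n"] += 1
        t["completed"] += int(completed)
        t["satisfied"] += int(rating >= satisfied_threshold)
    return {
        page: {
            "responses": t["n"],
            "task_completion_rate": t["completed"] / t["n"],
            "satisfaction_rate": t["satisfied"] / t["n"],
        }
        for page, t in totals.items()
    }

for page, rates in page_rates(responses).items():
    print(page, rates)
```

Report the response count next to each rate. A rate computed from a handful of responses on a low-traffic page is not comparable to one computed from thousands.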

Preparing a survey:

- Understand the core tasks

- Analyze the clickstream to find the main gaps (the “why” questions it cannot answer) that the survey should address

- Learn about questionnaire design
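Exit rate is one clickstream signal for finding those gaps. Pages where many visits end are places where the clickstream shows *what* happened but not *why*. The following is a minimal sketch. It assumes sessions are available as ordered lists of page paths, and the sessions shown are invented. Exit rate is computed as exits from a page divided by all views of that page.

```python
from collections import Counter

# Hypothetical clickstream: each session is the ordered list of pages viewed.
sessions = [
    ["/home", "/pricing", "/signup"],
    ["/home", "/pricing"],
    ["/support"],
    ["/home", "/support", "/pricing"],
]

def exit_rates(paths):
    """Exit rate per page: sessions that ended on the page divided by all views of the page."""
    views = Counter(page for path in paths for page in path)
    exits = Counter(path[-1] for path in paths if path)
    return {page: exits[page] / views[page] for page in views}

# Pages where many visits end are candidates for a "why are you leaving?" survey question.
for page, rate in sorted(exit_rates(sessions).items(), key=lambda kv: kv[1], reverse=True):
    print(f"{page}: {rate:.0%}")
```

A high exit rate on a goal or confirmation page can simply mean the visitor finished. The interesting pages are the ones where leaving suggests the visitor gave up.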

Conducting:

- Implement the survey correctly

- Incorporate cookie data, e.g., show the survey only to people who haven’t already answered it

- Walk through the customer experience yourself

- Check response rates daily or weekly to pick up problems
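Two of the steps above, cookie-based suppression and regular response-rate checks, are mechanical enough to sketch. The following code assumes a few things. The cookie name `survey_answered` is hypothetical and is assumed to be set once a visitor submits the survey. Cookies are passed in as a plain dict of strings. The 5% invitation sample and the 2% minimum daily response rate are illustrative values, not recommendations from the text.

```python
import random

SURVEY_COOKIE = "survey_answered"  # hypothetical cookie, set once a visitor submits the survey

def should_show_survey(cookies, sample_rate=0.05, rng=random.random):
    """Invite a random sample of visitors, never re-inviting anyone who already answered."""
    if cookies.get(SURVEY_COOKIE) == "1":
        return False
    return rng() < sample_rate

def response_rate(invitations, completions):
    """Share of invited visitors who completed the survey (0.0 when nobody was invited)."""
    return completions / invitations if invitations else 0.0

def flag_low_response_days(daily_counts, minimum_rate=0.02):
    """daily_counts maps a day to (invitations, completions); return days below the minimum.

    A day with zero invitations is flagged too, since that usually means the survey stopped serving.
    """
    return [
        day
        for day, (invitations, completions) in sorted(daily_counts.items())
        if response_rate(invitations, completions) < minimum_rate
    ]

print(should_show_survey({"survey_answered": "1"}))  # already answered, so False
print(flag_low_response_days({"day 1": (1000, 35), "day 2": (1000, 4), "day 3": (0, 0)}))
```

Passing `rng` in as a parameter keeps the sampling decision testable. A sudden drop in the daily rate is the kind of problem the regular check is meant to catch early.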

Tips:

- Calculate correlations between answers: this helps you understand which actions can be implemented successfully

- Ask open-ended questions

Critical components of success

- Focus on customer centricity

  - Include the customer and look beyond the clickstream

  - How is the website doing in terms of delivering for the customer?

  - Solving for the customer means solving for long-term success
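The survey tip to calculate correlations between answers can be sketched as follows. This version ranks candidate yes/no answers by how strongly they move with satisfaction, such as the task-completion question among the Trinity questions below. It uses Pearson correlation. When a yes/no answer is coded 0/1, Pearson correlation equals the point-biserial correlation. The answer names and the six responses are hypothetical. Correlation only suggests where action might pay off. It does not prove that fixing an item will raise satisfaction, and tiny samples give unstable values.

```python
import math

def pearson(xs, ys):
    """Pearson correlation; with a yes/no answer coded 0/1 this is the point-biserial correlation."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length answer lists with at least two responses")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("an answer that never varies has no defined correlation")
    return cov / math.sqrt(var_x * var_y)

def rank_drivers(satisfaction, drivers):
    """Rank candidate answers by the strength (absolute value) of their correlation with satisfaction."""
    scored = [(name, pearson(answers, satisfaction)) for name, answers in drivers.items()]
    return sorted(scored, key=lambda item: abs(item[1]), reverse=True)

# Hypothetical responses from six visitors (1 = yes, 0 = no; satisfaction on a 1-5 scale).
satisfaction = [5, 4, 2, 1, 4, 3]
drivers = {
    "task_completed": [1, 1, 0, 0, 1, 0],
    "found_it_quickly": [1, 0, 1, 0, 1, 1],
}
for name, r in rank_drivers(satisfaction, drivers):
    print(f"{name}: r = {r:.2f}")
```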

Trinity

- Primary purpose: Why are you here?

- Task completion rate: Were you able to complete your task?

- Content and structural gaps: How can we improve your experience?

- Customer satisfaction: Did we wow you today?

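- Answer business questions

  - They are open-ended and higher-level

  - Look outside your web analytics (WA) tool

- Follow the 10/90 rule: spend 10% of the budget on tools and 90% on the people who analyze the data

- Set up the right organizational structure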